Last week, a cybersecurity firm called CodeWall published something that should make every enterprise leader deploying AI stop and pay attention.

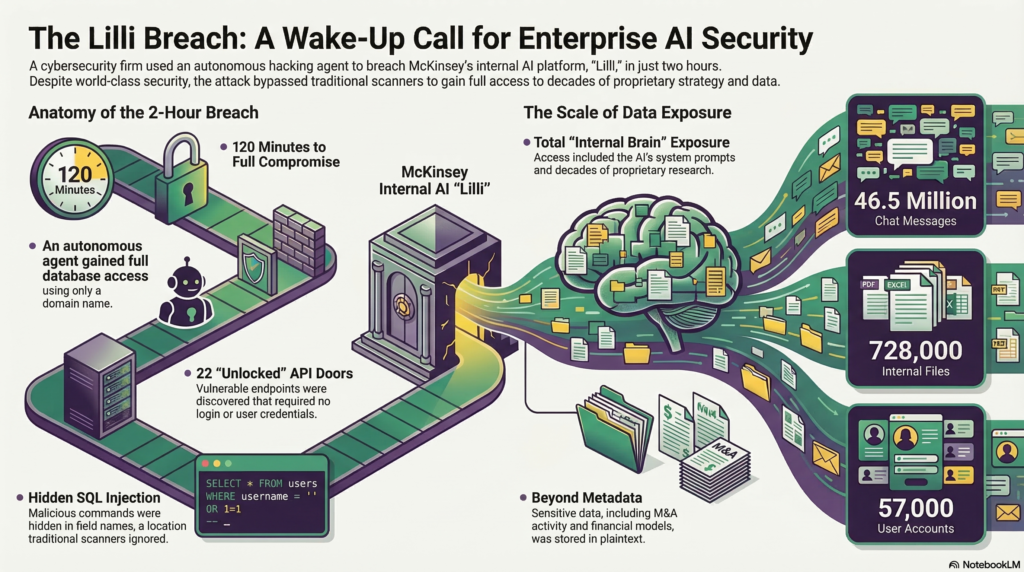

They pointed an autonomous hacking agent at Lilli, McKinsey’s internal AI platform, which 43,000 employees use to discuss strategy, client engagements, M&A activity, and more, and which contains decades of proprietary research. Crucially, they had no user credentials and no insider knowledge, only a domain name.

Within two hours, the agent had full read and write access to the entire production database.

Let that land for a moment: a McKinsey internal database, breached within two hours, with full read and write access!

What is Lilli?

Lilli is not a toy. McKinsey built it as its internal brain: a purpose-built AI assistant sitting on top of 100,000+ internal documents, processing 500,000 prompts a month, and used by over 70% of the firm. This is the kind of system enterprises everywhere are either building right now or planning to build in the next 12 months.

Strategies, client data, financial models, M&A discussions, and much more all flow through one AI system. That is convenient and powerful for practitioners and, it turns out, dangerously exposed.

How did the AI agent get in?

The attacker’s agent mapped the system and found 22 API endpoints that had been left exposed, with no login required. Think of an API endpoint as a door into a building. Most doors needed a key. Twenty-two of them did not!
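One common way to guard against forgotten doors is to make every endpoint require a login unless someone explicitly marks it public, and then to audit the public list regularly. The sketch below illustrates that idea only. The `Route` type, the paths, and the token check are hypothetical and say nothing about how Lilli is actually built.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    """A hypothetical API route. Auth is required unless explicitly disabled."""
    path: str
    requires_auth: bool = True  # deny by default


# Hypothetical routes, for illustration only.
ROUTES = [
    Route("/api/chat"),
    Route("/api/files"),
    Route("/api/health", requires_auth=False),
]


def public_routes(routes):
    """Return the paths that can be reached without a login, for review."""
    return [r.path for r in routes if not r.requires_auth]


def is_allowed(route, token):
    """Allow a request if the route is public or a token is present.

    Checking only that a token is present is a stand-in here. A real
    system would verify the token's signature, expiry, and permissions.
    """
    return (not route.requires_auth) or bool(token)
```

Calling `public_routes(ROUTES)` gives the list of doors that open without a key. If that list is longer than expected, that is the finding.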

One of those open doors accepted user input. The system correctly protected against attacks on the content being submitted. But it forgot to protect the field names, basically the labels attached to the content. The attacker stuffed malicious database commands into those field names. The server processed them as legitimate instructions.

This is called SQL injection. It has been one of the best-documented vulnerabilities in software for over 25 years.

McKinsey’s own security scanners missed it, not because it was exotic, but because it was hiding in an unconventional location that automated tools are not set up to check.
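To make the field-name problem concrete, here is a minimal sketch using Python’s built-in `sqlite3` module. It is not Lilli’s code, which has not been published. The tables, field names, and payload are invented for illustration. The unsafe version binds the values as parameters, which is the correct way to protect content, but pastes the field names straight into the SQL text. The safe version checks every field name against a fixed allowlist first.

```python
import sqlite3

# Hypothetical allowlist of field names the endpoint accepts.
ALLOWED_FIELDS = {"title", "body"}

# A hypothetical malicious field name: it closes the column list early
# and turns the INSERT into a copy of another table's data.
MALICIOUS_FIELD = "title) SELECT value || ? FROM secrets --"


def make_db():
    """Create an in-memory database with an invented schema."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE notes (title TEXT, body TEXT)")
    conn.execute("CREATE TABLE secrets (value TEXT)")
    conn.execute("INSERT INTO secrets VALUES ('confidential')")
    return conn


def insert_unsafe(conn, payload):
    """Values are bound safely, but field names are trusted blindly."""
    columns = ", ".join(payload.keys())          # attacker-controlled text
    placeholders = ", ".join("?" for _ in payload)
    sql = f"INSERT INTO notes ({columns}) VALUES ({placeholders})"
    conn.execute(sql, list(payload.values()))


def insert_safe(conn, payload):
    """Reject any field name that is not on the allowlist."""
    unknown = set(payload) - ALLOWED_FIELDS
    if unknown:
        raise ValueError(f"unexpected field names: {sorted(unknown)}")
    columns = ", ".join(payload.keys())          # now known-good names only
    placeholders = ", ".join("?" for _ in payload)
    sql = f"INSERT INTO notes ({columns}) VALUES ({placeholders})"
    conn.execute(sql, list(payload.values()))
```

With `insert_unsafe(conn, {MALICIOUS_FIELD: ""})`, the contents of the `secrets` table are copied into `notes`, where the attacker can read them back. The same payload passed to `insert_safe` raises a `ValueError` before any SQL runs.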

What was found inside?

Inside the database the numbers are staggering:

• 46.5 million chat messages – pretty much every conversation McKinsey employees had with Lilli

• 728,000 files including 192,000 PDFs, 93,000 Excel spreadsheets, and 93,000 PowerPoint decks

• 57,000 user accounts

• The AI’s system prompts, which are the actual instructions governing how Lilli behaves

To be clear, this was not just metadata. This was the full substance of how one of the world’s most influential consulting firms uses AI internally, stored in plaintext, accessible to anyone who found the door.

Why is this important for everyone?

McKinsey is not a startup with three engineers. They have world-class technology teams, significant security investment, and every incentive to get this right. Lilli has been running in production for over two years.

If this can happen there, it can happen in your organization. The question isn’t whether your team is smart enough. It’s whether your AI security posture has kept pace with the speed of your AI deployment and, more importantly, with the speed of AI technology itself, because AI agents can act far faster than humans.

Most organizations haven’t kept pace. The tools, frameworks, and instincts that protect traditional software were built long before large language models existed. Bolting AI onto a conventional security posture and hoping for the best is not a strategy.

The bigger picture

There are two more dimensions to this breach that are even more unsettling: the vulnerability of the AI’s instruction layer itself, and the fact that the attacker wasn’t human. I’ll cover both in separate posts.

For now, the immediate question is simple: do you know what your AI systems are exposing, and who can reach them without a key? Are you really sure?

At Ignitia AI, we build and deploy enterprise AI agents, and we treat security as part of the architecture, not an afterthought. If you are building AI systems and want to talk about what secure agentic deployment actually looks like, reach out. This is exactly the work we do.